Outsourcing new CPU scores for lobbies

-

Generally i woul'd never advertise UserBenchmark.com, because they are extremly biased against newer Ryzen CPUs, but can you execute their Benchmark and check how your RAM compares? It will tell you how well other people with the same hardware did. We need to find out if your RAM is actually slow for some reason or if there is a problem with the new RAM banchmark.

-

UserBenchmarks: Game 8%, Desk 65%, Work 7%

CPU: Intel Core i7-3740QM - 71.9%

GPU: Nvidia GTX 660M - 7.6%

SSD: Intel 520 Series 120GB - 50.9%

HDD: Seagate ST2000LM015-2E8174 2TB - 60.1%

RAM: Unknown 78.C2GCN.B730C 2x8GB - 55%

MBD: Clevo W3x0ETI understand that 1600MHz DDR3 is outdated, but my config is not subpar and doesn't deserve 330 pts. Can we make a test round of Gap or similar map with some of the admins?

-

Ok well there is no data for this RAM in the Userbenchmark database, so that doesn't give us any information sadly.

-

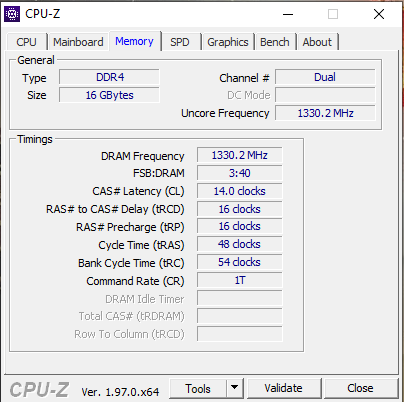

You have the speed and timings, pretty much all you need to compare ram speed.

-

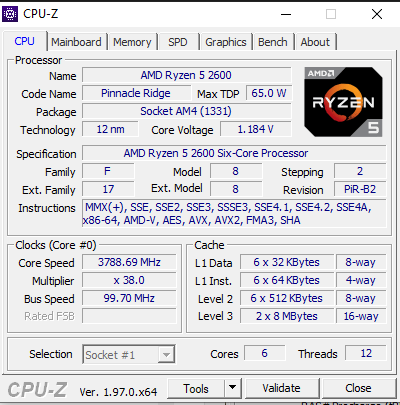

CPU: Intel I7-7700

MB ASUS Z270 TUF 2

RAM : 16 GB DDR4

Integrated graphic

Old CPU: 141

New CPU: 273

No Lag in game -

Yeah but the question is, why are some new scores so bad? Does the new benchmark heavily tax smaller CPU cache sizes or something? That would explain it maybe?

In my opinion it would be a bit extreme to have CPUs with 6-8MB L3 cache have so much worse scores, if that is the case (Ryzen 3xxx has 16MB per CCX, modern i7 like 20MB).

Or does it maybe just measure bandwith? Thats would be only half of the story, latency can be just as important.

Of course DDR3 has much less bandwith, but it does not have worse latency than DDR4, which is probably kinda more important than bandwith.

We need some information what exactly the new RAM benchmark is measuring.

-

Here you can see what it does: https://github.com/FAForever/fa/blob/741febf45a165e257db972fc2104484a51dd799d/lua/ui/lobby/lobby.lua#L5228

-

@giebmasse said in Outsourcing new CPU scores for lobbies:

Here you can see what it does: https://github.com/FAForever/fa/blob/741febf45a165e257db972fc2104484a51dd799d/lua/ui/lobby/lobby.lua#L5228

Thanks.

@Uveso

I am going to use object sizes from https://wowwiki-archive.fandom.com/wiki/Lua_object_memory_sizes, which is a different Lua version, but hopefully not too far off.Ok so if i understand this correctly, this is what the memory benchmark is basically doing (all of the rest seems to be the CPU benchmark, the code below was added by Uveso in his first commit before the CPU benchmark was added back):

var foo = "123456789"; // reference to a 17 (base Lua string size) + 9 byte string var table = {} // dictionary, 40 Bytes per key hash for (1..25 in 0.0008 increments) { table[toString(index)] = foo // a 32bit reference is assigned }So we have a loop with 30k iterations, that assigns the same reference to 30k different hashes, which all are strings converted from floats.

Tables in lua contain hashes that are 40 bytes each, so the total size of the table (not counting any additional bookkeeping structure) is (hash=40 + ref=4) *30k = 120k Bytes, or 120 KB.

So there are a three problems with this benchmark if my understanding is correct:

-

Even if we assume that the Table is 4 times as big as the hashes/references it contains, we still only end up with a memory size of 480KB. This fits entirely in the L2 cache (which is generally going to be around 1 MB), so we are potentially not even filling the L3 cache. I don't know how the Lua interpretation works exactly, but i would be surprised if the CPU even needed to hit RAM at all during this function.

-

Converting floating point numbers to strings is VERY tricky. If not optimized specifically (in C preferably), it can eat up A TON of performance. Now im assuming that

tostringis an optimized function, but that whole benchmark could end up just measuring the time it takes fortostringto execute, instead of having anything to do with memory, even if that function is optimized. -

We are using a dictionary, so the values are going to be sorted by hash. All in all there could be a lof of table indirection and bucket creation going on, so the benchmark might measure the insert performance of Lua tables and hashing, instead of doing anything with RAM.

So best case scenario is that this is measuring L2 cache, but it might not do that at all. Now i don't know a lot about how Lua interpretation works, so if there are any errors in my logic i welcome everybody to tell me.

Of course it is much easier to critisize benchmarks than to make good ones, so i think its very good that you guys implemented something that doesn't just measure arithmetic operations.

-

-

In my mind it would be better to

- either make the table much bigger (and NOT measure the table creation) and then measure the inserts / reads on that table

- save randomized objects inside the table that are much bigger than strings (again, not measung creation time of table and objects), and then do reads into parts of the object to pull the objects into the CPU cache (which would push parts of the table outside of the cache if you read enough objects.)

-

I thought memory is allocated / synced with RAM, regardless if it fits the cache. Do you have a source to read up on?

-

Yeah but if you let the CPU decide to sync the cache whenever it wants (because you don't put pressure on the cache), it might do it after the benchmark ends, or god knows when. But even if it would work, you would only measure RAM write, never read.

You can force the CPU to pull things into the cache that i doesn't have yet in the cache (= cache miss) really only by randomly demanding from the CPU to give you content of memory addresses over a larger area of memory than what would fit into cache.

I have no source on this, is just pick up tidbits here and there, like links from SO like https://stackoverflow.com/questions/8126311/what-every-programmer-should-know-about-memory or just youtube videos. Problem is i have never implemented a interpreted language nor my own HashTable, so i have to make a bunch of assumptions about how tables in Lua work and my understanding is definitely far from complete.

Measuring computer performance is fucking hard xD

-

I vaguely recall the old score being about 120. Now it's reading 108 to 102.

It's a 5600X with 3600 ram. I have the fclock at 1800 (max that that this Zen generation can stablely go). Nothing is traditionally OCed since you don't do that with Zen CPUs.

-

My score is doubled after this update. CPU is Intel I7 7700 with DDR4 RAM. The gamers should trust CPU scores and I'm proposing to do next :

- Use Zlo performace test is present at https://forum.faforever.com/topic/328/cpu-performance-tests

- Check CPU scores

- Prepare some information about hardware.

GOAL: Build a strong relation between CPU score and the test replay time.

===================

My CPU score now is : 270 (after overclocking in BIOS (memspeed up) : 273)

My replaytime of the zlo replay: 18:15 sec

===================

Hardware

CPU: Intel I7-7700

MB ASUS Z270 TUF 2

RAM : 16 GB DDR4

Integrated graphic

-

What was / is your CPU score?

-

@jip I had 141 , I have now 270 . Also I have tried to overclock PC a little and 've got ... 273

-

FX-8350 @ 4ghz

32gb DDR3 (1600mhz)

old: 245

new: 325 !!!

Now I can't play. I simply get kicked from games. -

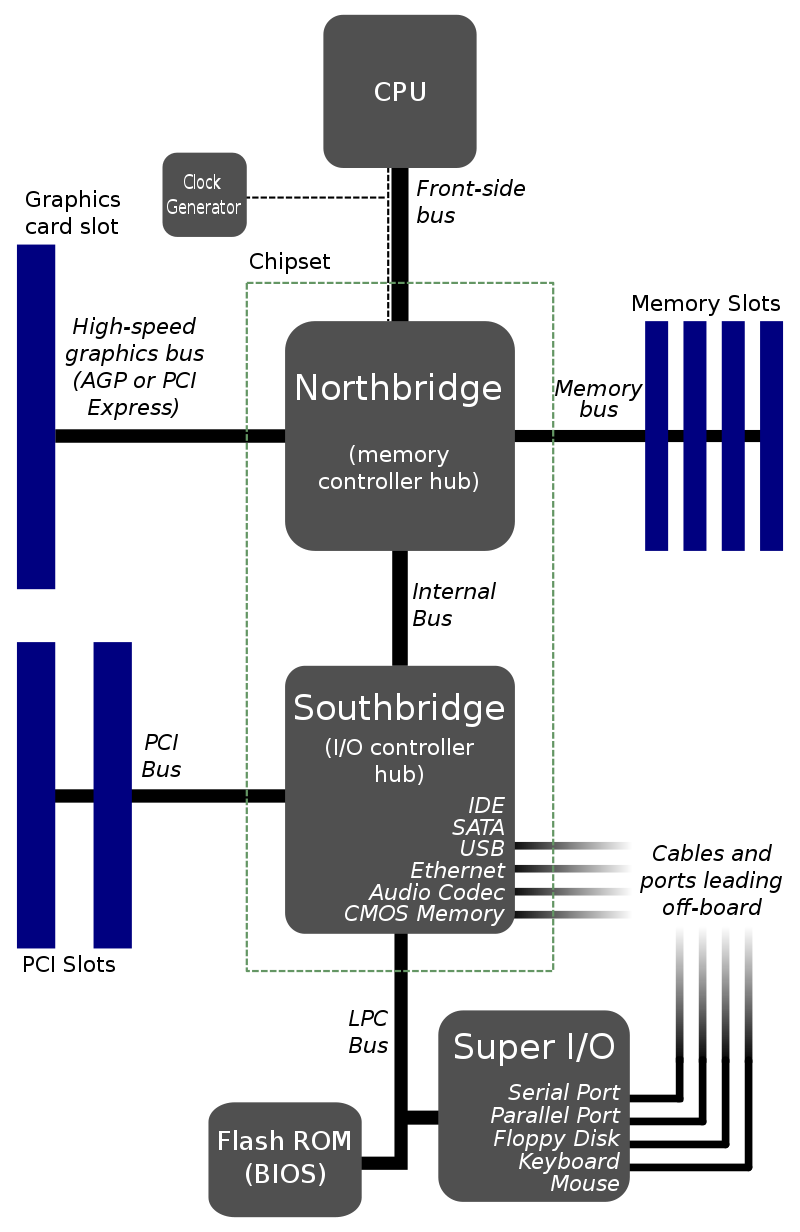

I investigate the benchmark issue since 2018:

Postby Uveso » 16 Feb 2018, 19:04

Maybe i am the only one with this opinion, but CPU power ist not the mainproblem.Why we have absurd fast CPU's for Supcom and still lag!?!

While having some PC here i did some testing.Surprise surprise, it's RAM speed.

For example; i changed from DDR3 to DDR4 RAM (I have a board with DDR3 and DDR4 slots).

The simspeed was increasing from +5 to +6

I got also good results when overclocking the HT-link (AMD -> connection between Northbridge and RAM) or overclocking the RAM itself (low CAS Latency, 4xBank Interleaving, reduced refresh cycle(yeah, i know, i know... ))

))Maybe we should try to not waste too much LUA memory in huge arrays

(Memory use from big unit models, LOD settings etc are not influencing the game speed)Some benchmarks to compare:

18328 QuadCore Q8400 @ 2,5GHz

18543 FX-8150 @ 4,2GHz

18545 PhenomII-X4-955 @ 3,8GHz / 200MHz

18547 PhenomII-X4-955 @ 3,8GHz / 211MHz

18559 PhenomII-X4-955 @ 4,0GHz / 200MHz

18576 PhenomII-X4-955 @ 4,2GHz / 205MHz

18585 PhenomII-X4-955 @ 4,1GHz / 200MHz NB 2200

18589 PhenomII-X4-955 @ 4,0GHz / 200MHz NB 2400

18595 PhenomII-X4-955 @ 4,1GHz / 200MHz NB 2400

18645 i7 6700K @ 4.4GHz

18708 i7 4790K @ 4.4GHz

And some words about the memspeed and "overclocking" with lower benchmark sesults:

by Uveso » 07 Mar 2020, 04:06

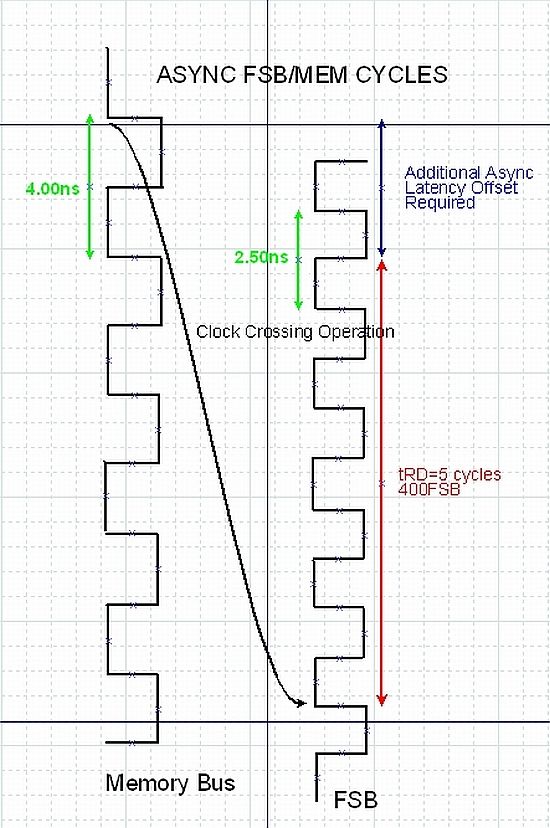

ZLO_RD wrote: Edit: lol this test is kinda meaningless xD. 3000 beats 3200 with same timings. 2133 has good timings and no latency data, so can't compare results.Yes, this happens if ppl dont know how a PC works and try to overclock.

If you change the speed of your memory then you also need to set the speed from your front side bus (mainboard clock)

This is needed to set the clock for the mainboards north bridge.North Bridge is the memory manager/controller:

Unsync memory/NorthBridge clock settings will lead to very low memory latency:

You can see here that you will lose about 20% memory speed (Additional Async Latency Offset)

So its no surprise to have fast benchmark results with 3000 clock because 2133 and 3200 are set unsync to North Bridge.

Have in mind we need fast memory "latency" for SupCom, we don't need a high memory clock or high memory throughput.

-

I get that it is really to hard to make a proper benchmark. But this kind reads like you just created some code for the benchmark more or less by chance and the numbers lined up on 4 PCs and thats it. We have no idea what this benchmark is really measuring, and the spikes by people that seem to have normal RAM tell me that his benchmark is neither measuring RAM bandwidth nor latency.

Maybe Supcom is really dependent on caches and maybe the code is measuring L2 and MAYBE thats a good thing, but it seems to break down for a bunch of systems obviously. If those systems are not actually as slow as the benchmark indicates, we should tweak it, and making sure it actually is liekly to hit RAM seems like a good tweak to me.

Even if each table in the actual game fits in cache, the game will use all of them per tick (causing cache pressure), so we cannot use a single table that has typical game size if the game uses a whole bunch of them.

Maybe i can write up an idea later.

-

I have written something that we could maybe use as a base for a better memory benchmark:

https://gist.github.com/Katharsas/89b0e12b3cc751a51d0c278421d6599aI have tried to not run into any of the problems described in my first post. I have taken care not to call the random function inside hot loops in case that this is a slow function.

It consists of 2 benchmark functions operating on a previously filled table. Based on the assumptions about Lua object sizes, it should have a minimum memory size of about 50MB. The Lua interpreter that i used in VSCode will take up 220MB after the table is filled with objects by the setup functions.

I have adjusted the iterations so that each benchmark runs in about 2 seconds in my machine. Now, i don't know how Lua works in the game, so im not sure if this could be ported to the FAF Lua benchmark. It would obviously require normalization / resscaling so that we get numbers that are in a usefull range. However i cannot really see in the FAF source code how the number that we see at the end is calculated and how the time is measured.

-

I've noticed many laptop CPU's stay in their power saving mode when running the newer test (more than before). Would explain some of the spikes.

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login