@jip I had 141, now I have 270. I also tried to overclock my PC a little and got... 273.

Outsourcing new CPU scores for lobbies

FX-8350 @ 4 GHz

32 GB DDR3 (1600 MHz)

old: 245

new: 325 !!!

Now I can't play. I simply get kicked from games.

I have been investigating the benchmark issue since 2018:

Post by Uveso » 16 Feb 2018, 19:04

Maybe I am the only one with this opinion, but CPU power is not the main problem.

Why do we have absurdly fast CPUs for SupCom and still lag?!

Since I have a few PCs here, I did some testing.

Surprise surprise, it's RAM speed.

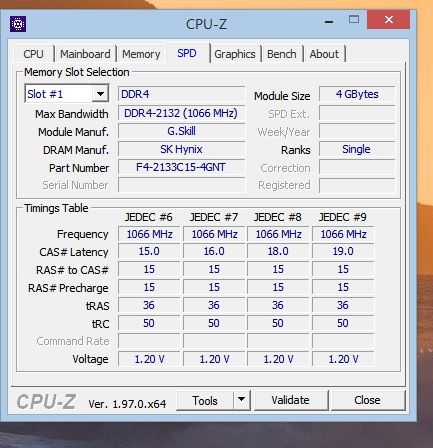

For example, I changed from DDR3 to DDR4 RAM (I have a board with both DDR3 and DDR4 slots).

The sim speed increased from +5 to +6.

I also got good results when overclocking the HT link (AMD: the connection between the northbridge and RAM) or overclocking the RAM itself (low CAS latency, 4x bank interleaving, reduced refresh cycle; yeah, I know, I know...).

Maybe we should try not to waste too much Lua memory on huge arrays.

(Memory used by big unit models, LOD settings, etc. does not influence game speed.)

Some benchmarks to compare:

- 18328: QuadCore Q8400 @ 2.5 GHz
- 18543: FX-8150 @ 4.2 GHz
- 18545: Phenom II X4 955 @ 3.8 GHz / 200 MHz
- 18547: Phenom II X4 955 @ 3.8 GHz / 211 MHz
- 18559: Phenom II X4 955 @ 4.0 GHz / 200 MHz
- 18576: Phenom II X4 955 @ 4.2 GHz / 205 MHz
- 18585: Phenom II X4 955 @ 4.1 GHz / 200 MHz, NB 2200
- 18589: Phenom II X4 955 @ 4.0 GHz / 200 MHz, NB 2400
- 18595: Phenom II X4 955 @ 4.1 GHz / 200 MHz, NB 2400
- 18645: i7 6700K @ 4.4 GHz
- 18708: i7 4790K @ 4.4 GHz

And some words about memory speed and "overclocking" that gives lower benchmark results:

by Uveso » 07 Mar 2020, 04:06

ZLO_RD wrote:

Edit: lol this test is kinda meaningless xD. 3000 beats 3200 with same timings. 2133 has good timings and no latency data, so can't compare results.

Yes, this happens when people don't know how a PC works and try to overclock.

If you change your memory speed, you also need to adjust the speed of your front side bus (mainboard clock).

This is needed because it sets the clock of the mainboard's northbridge.

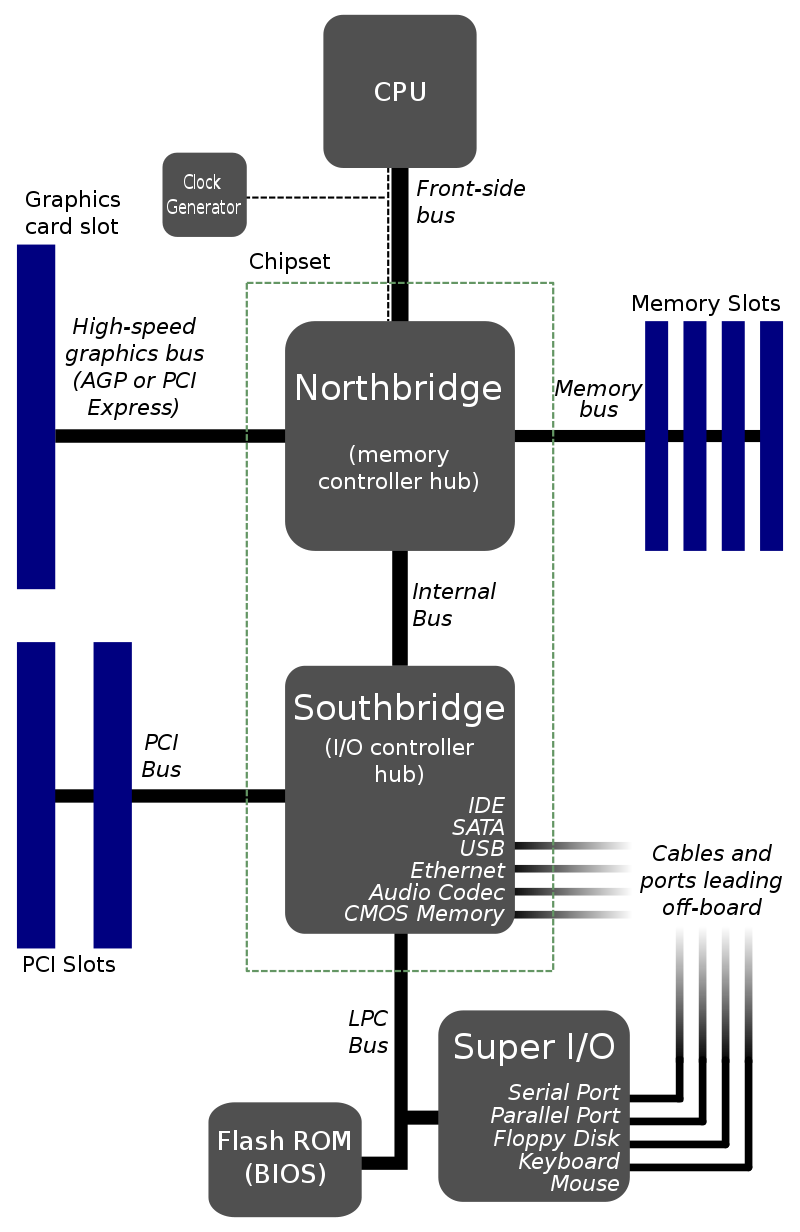

The northbridge is the memory manager/controller:

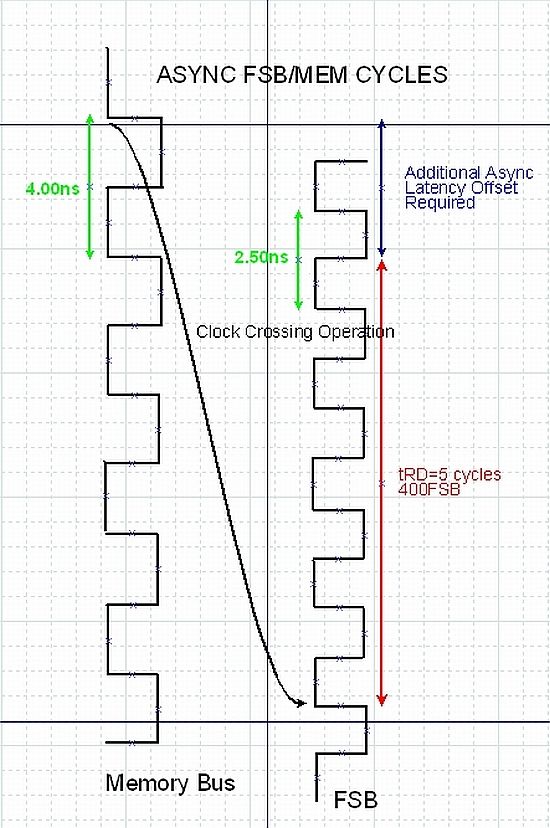

Unsynchronized memory/northbridge clock settings lead to much higher memory latency:

You can see here that you lose about 20% of your memory speed (Additional Async Latency Offset).

So it's no surprise that the 3000 clock gives fast benchmark results, because 2133 and 3200 are running out of sync with the northbridge.

Keep in mind that SupCom needs low memory "latency"; it doesn't need a high memory clock or high memory throughput.
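A minimal sketch of the usual first-word latency estimate (latency in ns = CL * 2000 / data rate in MT/s) shows why the clock alone says little. The CL16 timings are hypothetical, since ZLO_RD's actual timings aren't given, and the formula cannot capture the northbridge async offset at all.

```python
def cas_latency_ns(cas_latency: int, data_rate_mts: int) -> float:
    """Estimate first-word memory latency in nanoseconds.

    DDR memory transfers twice per I/O clock, so the clock runs at
    data_rate / 2 MHz and one cycle lasts 2000 / data_rate ns.
    """
    if cas_latency <= 0 or data_rate_mts <= 0:
        raise ValueError("CAS latency and data rate must be positive")
    return cas_latency * 2000 / data_rate_mts


# Hypothetical timings: the same CL16 at two different clocks.
for rate in (3000, 3200):
    print(f"DDR4-{rate} CL16: {cas_latency_ns(16, rate):.2f} ns")
```

With identical CL16 timings, 3200 comes out at 10.00 ns versus 10.67 ns for 3000, so on paper 3200 should win. If 3000 wins in practice, something other than the DRAM timings (such as the async northbridge offset Uveso describes) is the likely cause.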

I get that it is really hard to make a proper benchmark. But this reads a bit like you wrote some benchmark code more or less by chance, the numbers lined up on 4 PCs, and that's it. We have no idea what this benchmark is really measuring, and the spikes from people who seem to have normal RAM tell me that this benchmark measures neither RAM bandwidth nor latency.

Maybe SupCom really depends on caches, maybe the code is measuring L2, and MAYBE that's a good thing, but it obviously breaks down for a bunch of systems. If those systems are not actually as slow as the benchmark indicates, we should tweak it, and making sure it is actually likely to hit RAM seems like a good tweak to me.

Even if each table in the actual game fits in cache, the game uses all of them every tick (causing cache pressure), so a benchmark cannot use a single table of typical in-game size when the game uses a whole bunch of them.
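The cache-pressure argument is easy to check with back-of-the-envelope arithmetic. In this sketch the table count, table size, 40 bytes per entry and 8 MiB cache are all hypothetical placeholders, not measurements of the actual game.

```python
def working_set_report(table_entries: list[int], bytes_per_entry: int,
                       cache_bytes: int) -> dict:
    """Compare per-table and aggregate footprint against one cache level."""
    largest = max(table_entries) * bytes_per_entry
    total = sum(table_entries) * bytes_per_entry
    return {
        "largest_table_bytes": largest,
        "total_bytes": total,
        "each_table_fits": largest <= cache_bytes,
        "all_tables_fit": total <= cache_bytes,
    }


# Hypothetical numbers: 500 tables of 2,000 entries, 40 bytes per entry,
# checked against an 8 MiB last-level cache.
report = working_set_report([2000] * 500, bytes_per_entry=40,
                            cache_bytes=8 * 1024 * 1024)
print(report)
```

Each table is only 80,000 bytes and fits comfortably, but all 500 together need 40,000,000 bytes, far more than the cache. A benchmark that times one 80 KB table would therefore never see the misses the game does.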

Maybe I can write up an idea later.

I have written something that we could maybe use as a base for a better memory benchmark:

https://gist.github.com/Katharsas/89b0e12b3cc751a51d0c278421d6599a

I have tried to avoid all of the problems described in my first post. I took care not to call the random function inside hot loops, in case it is slow.

It consists of two benchmark functions operating on a previously filled table. Based on my assumptions about Lua object sizes, the table should take up at least about 50 MB. The Lua interpreter I used in VS Code takes up 220 MB after the setup functions have filled the table with objects.

I have adjusted the iterations so that each benchmark runs in about 2 seconds on my machine. Now, I don't know how Lua works in the game, so I'm not sure whether this could be ported to the FAF Lua benchmark. It would obviously require normalization/rescaling so that we get numbers in a useful range. However, I can't really tell from the FAF source code how the final number is calculated or how the time is measured.
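One way the rescaling could work, purely as a sketch: divide each benchmark's time by the time of a hypothetical reference machine and scale so that machine lands on a chosen score. This is not how FAF computes its score (that is unknown here); it assumes lower is better, consistent with the higher scores getting people kicked in this thread. Combining the ratios with a geometric mean is a swapped-in choice, not something from the gist.

```python
import math


def normalized_score(timings, reference_score: float) -> float:
    """Combine several benchmark timings into one lower-is-better score.

    `timings` is a list of (elapsed_s, reference_elapsed_s) pairs, one per
    benchmark function. Each pair becomes a ratio against the reference
    machine; the ratios are combined with a geometric mean so that no single
    benchmark dominates just because it happens to run longer.
    """
    if not timings:
        raise ValueError("need at least one timing")
    ratios = []
    for elapsed, reference in timings:
        if elapsed <= 0 or reference <= 0:
            raise ValueError("timings must be positive")
        ratios.append(elapsed / reference)
    return reference_score * math.prod(ratios) ** (1 / len(ratios))
```

For example, a machine that needs 3.0 s where the reference needs 2.0 s gets 1.5 times the reference score, and a machine that is twice as slow on one benchmark but twice as fast on the other lands exactly on the reference score.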

I've noticed that many laptop CPUs stay in power-saving mode when running the newer test (more often than before). That would explain some of the spikes.

I usually force high-performance mode before I start, and I have tried running various kinds of benchmarks before the CPU test.
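The manual routine above (warm the machine up, then measure) could be automated roughly like this. It is only a sketch: an untimed warm-up gives the power manager a chance to raise clocks but does not guarantee it, and whether a benchmark should measure boosted clocks at all is exactly what the replies debate. Taking the median of several runs is a swapped-in choice to damp one-off spikes; `run_with_warmup` is a hypothetical name.

```python
import statistics
import time


def run_with_warmup(bench, warmup_s: float = 2.0, runs: int = 5):
    """Run `bench` for a warm-up period, then time several runs.

    The untimed warm-up gives the OS power manager a chance to raise clock
    speeds first; whether it actually does depends on the laptop and its
    power plan. Returns the median run time and all individual times.
    """
    deadline = time.perf_counter() + warmup_s
    while time.perf_counter() < deadline:
        bench()
    times = []
    for _ in range(runs):
        start = time.perf_counter()
        bench()
        times.append(time.perf_counter() - start)
    return statistics.median(times), times


if __name__ == "__main__":
    median, times = run_with_warmup(lambda: sum(i * i for i in range(200_000)),
                                    warmup_s=1.0)
    print(f"median {median:.4f} s over {len(times)} runs")
```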

Now I randomly got a 260 rating, and I'm not running any new CPU tests.

Just to note: before that I had a stable 250 rating even in power-saving and balanced modes, and gameplay was normal until late game if I accidentally left it in power-saving mode.

I think we can conclude that one reason this test produces higher scores is that it doesn't push laptops into high performance mode. To me, that sounds like a good thing, as a laptop typically can't sustain high performance mode for long when the GPU is involved.

A work of art is never finished, merely abandoned

It isn't really a good thing. In all the cases I've seen, the power-saving mode the laptops are in does not reflect their true in-game performance: power saving means a much lower clock speed, even compared to a laptop that may be throttling. I've seen scores between 350 and even 1750 when the laptop's true performance would have been somewhere around 250 or so.

Well, I just made a few runs with my laptop, and surprisingly it's actually scoring better than before.

The old score was jumping around 185-187. The new one seems to land at an average of about 174, though depending on the run it goes as low as 168, with the worst run scoring 178 (funnily enough, that was the first run).

That was with the laptop running on a half-empty battery in performance mode. There was also a little more background bloat than during the runs on the old benchmark, but the new one doesn't seem to care about it.

I also agree with Gieb that it's clearly not a good thing if the benchmark can't reliably tell you the expected performance of the player's PC.

I wasn't referring to power saving mode; I was referring to not being in high performance mode. There is a middle ground, and in my opinion that is exactly what we want to benchmark. My own laptop, at least, hits high performance mode for the first 15 minutes or so and then throttles back into 'regular mode'. A typical laptop cannot reliably sustain its peak performance, and it isn't uncommon for people to complain about laptop users.

Lenovo y-50

16 GB RAM

i7-9750H

before: 160 (regular mode, as Supreme Commander in the lobby doesn't trigger high performance mode)

after: 141 in high performance mode (triggered by a single-core background task), 225 in 'regular mode'.

Let's at least gather more information, so keep the scores coming.


I went from about 181 to 140 on my laptop. I'll gather additional data on my laptop in a moment.

I’m a shitty 1k Global. Any balance or gameplay suggestions should be understood or taken as such.

Project Head and current Owner/Manager of SCTA Project

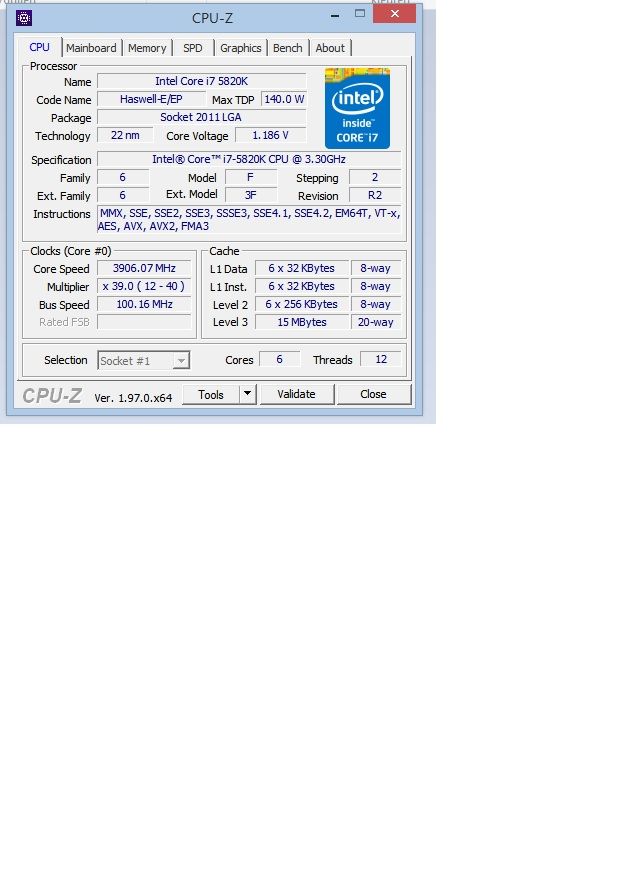

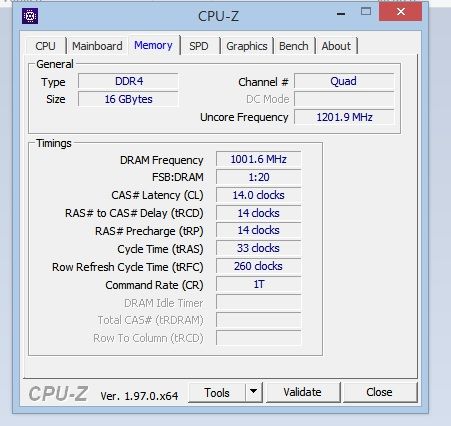

I've been playing this game for a few months now and never had any issues. However, since patch 3723 my CPU score has gone from 180 to 359.

It is now hard for me to join any games; most of the time I get kicked because of this.

Intel i7 5820, 16 GB DDR4, NVIDIA GTX 970

Please restore the old CPU score!

Just to ask, did you run the laptop in performance/high power mode when running the benchmark?

@triple-x1

@giebmasse Oh yes, you are right. My sleepy mind thought: these numbers in the CPU name look like laptop SKUs, so it must be a laptop.

It totally slipped my mind that earlier i7s tended to use this kind of nomenclature, compared to the current, more streamlined line-up.

It looks like the new CPU score is overcorrelated with cache size. My CPU performs similarly to Jip's (https://cpu.userbenchmark.com/Compare/Intel-Core-i7-9750H-vs-Intel-Core-i7-7700/m766364vs3887 ), but the CPU scoring utility thinks my CPU is twice as slow. I checked around 1-15 online replays today (usually Dual Gap), and the replay never slowed down to 0, only sometimes to +1. But I get kicked from lobbies regularly with the verdict "bad CPU".

I think we've got enough data to ratify some form of change. I'm not sure what yet, but please keep the data coming.


Hello,

My test steps are:

- Install Lua from https://github.com/rjpcomputing/luaforwindows/releases

- Use a short adaptation of the FAF code from: https://pastebin.com/GUTBXF7a

I've added GetSystemTimeSeconds() and moved the string out together with the yield,

and verified that the code shows a strong relation: on my iMac it gives 1492, with a running time of around 1.5 s.
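We don't know how the lobby measures the time (that is the question asked further down), but moving the yield around raises one possible pitfall worth checking: a wall-clock timer such as GetSystemTimeSeconds() taken around a whole coroutine also counts whatever the game does between yields. This sketch uses a Python generator as a stand-in for a Lua coroutine; `chunked_benchmark` and `drive` are hypothetical names, and `between_chunks` stands in for the rest of the frame.

```python
import time


def chunked_benchmark(total_iterations: int, chunk_size: int):
    """Stand-in for a benchmark coroutine that yields between chunks of work."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    acc = 0
    done = 0
    while done < total_iterations:
        step = min(chunk_size, total_iterations - done)
        for i in range(step):
            acc += i * i
        done += step
        yield  # hand control back, like a Lua coroutine yielding to the game
    return acc


def drive(gen, between_chunks):
    """Resume `gen` until it finishes; return (busy seconds, wall seconds)."""
    busy = 0.0
    wall_start = time.perf_counter()
    while True:
        start = time.perf_counter()
        try:
            next(gen)
        except StopIteration:
            busy += time.perf_counter() - start
            break
        busy += time.perf_counter() - start
        between_chunks()  # everything else the frame does; not benchmark work
    return busy, time.perf_counter() - wall_start


if __name__ == "__main__":
    busy, wall = drive(chunked_benchmark(200_000, 10_000),
                       between_chunks=lambda: time.sleep(0.01))
    print(f"busy {busy:.3f} s, wall {wall:.3f} s")
```

If only the wall time is reported, a machine with a slow frame (for example a laptop stuck in power-saving mode while the lobby renders) is penalized for work the benchmark never did; timing only the busy segments avoids that.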

CPU: Intel Core i7-4980HQ, 16 GB DDR3 RAM

The Windows machine (i7-7700) gets 1202.

I have a question: how is the time calculated in the lobby?
